Claude Code: Anthropic's new agent harness

Anthropic's new CLI agent is here and it's actually changing how I build Python and ML systems.

Anthropic launched Claude Code about a month ago. I’ve been using it every day since. Most AI assistants live in sidebars or IDE plugins, but those always felt a bit clunky for machine learning work. I usually end up just copy-pasting code from a browser anyway, especially when I’m working on a remote GPU server. Claude Code is different because it lives in the terminal.

Getting Started: The Setup for Python Devs

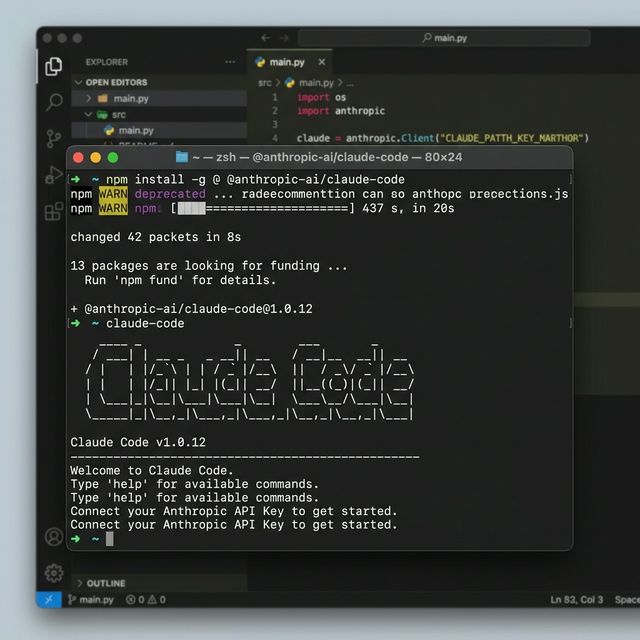

Even if you’re a pure Python developer, you’ll need Node.js to install the tool. It’s a quick global install via npm:

npm install -g @anthropic-ai/claude-code

Once it’s installed, you just run claude in your project directory. The first time you run it, you’ll be asked to authenticate. It opens a browser window where you sign in and connect your Anthropic account, and then you’re ready to go.
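If you’d rather check the prerequisites from Python before you start, a small preflight sketch could look like this. The helper name missing_tools is hypothetical, and the only assumption is that the executables are called node, npm, and claude on your PATH.

```python
import shutil


def missing_tools(names):
    """Return the executable names that cannot be found on PATH."""
    return [name for name in names if shutil.which(name) is None]


# node and npm are needed for the install; claude should show up afterwards.
missing = missing_tools(["node", "npm", "claude"])
if missing:
    print("Not on PATH yet:", ", ".join(missing))
else:
    print("node, npm, and claude are all available")
```

Running it before and after the npm install is a quick way to confirm the global install landed somewhere your shell can see.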

Connecting to your ML codebase

When you start a session, Claude Code works directly against the files in your project directory. As far as I can tell, it doesn’t build a separate index up front; it searches and reads files on demand. This is a huge benefit for large ML projects with many data scripts and model configurations, because it can find where your training loops and evaluation scripts live without you pasting anything in. It won’t upload your dataset wholesale, but any file it does read is sent to the model as context, so keep sensitive data out of its path.

In my experience the search is efficient. It appears to respect your .gitignore file, so it skips large data folders and virtual environments. This keeps the token usage down and keeps the agent focused on the code that actually matters.
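To see why ignore rules matter so much for scope, here is a rough sketch of filtering a project tree by ignore patterns. It is not how Claude Code itself works internally, and it only matches plain file and directory names with fnmatch, which is far simpler than real .gitignore semantics. The function names and the example patterns are assumptions for illustration.

```python
import fnmatch
import os


def is_ignored(name, patterns):
    """True if a file or directory name matches any ignore pattern."""
    return any(fnmatch.fnmatch(name, p.rstrip("/")) for p in patterns)


def list_code_files(root, patterns):
    """Walk root and return relative paths that survive the ignore patterns."""
    kept = []
    for dirpath, dirnames, filenames in os.walk(root):
        # Prune ignored directories in place so os.walk never descends into them.
        dirnames[:] = [d for d in dirnames if not is_ignored(d, patterns)]
        for filename in filenames:
            if not is_ignored(filename, patterns):
                full_path = os.path.join(dirpath, filename)
                kept.append(os.path.relpath(full_path, root))
    return sorted(kept)


# Example patterns a typical ML repo might carry in its .gitignore (hypothetical).
IGNORE = ["data/", ".venv/", "*.parquet", "__pycache__/"]
```

Calling list_code_files(".", IGNORE) in a repo gives a feel for how small the relevant code surface is once datasets and environments are excluded.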

The First Run: Fixing ML Bugs

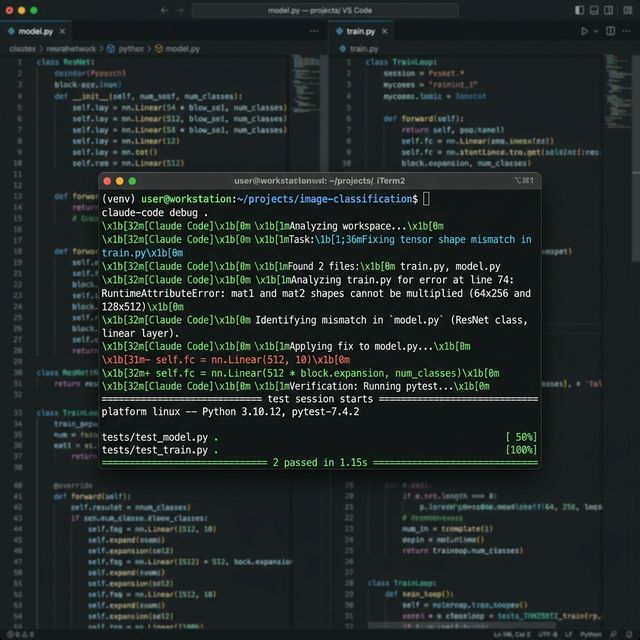

My first real test for Claude Code was a cryptic tensor shape mismatch in a PyTorch training script.

I piped the stack trace into the tool: python train.py 2>&1 | claude "fix this tensor mismatch".

1. Identifying the Culprit

It didn’t just guess. It searched through model.py and train.py, identified exactly where the linear layer didn’t match the input batch, and explained why the calculation was off. It handles complex error logs well, even when they involve multiple levels of abstraction in your model architecture.
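My actual bug isn’t reproduced here, but a very common version of this mismatch is a linear layer whose input size was hard-coded for a different image resolution. The sketch below works through that arithmetic in plain Python using the standard output-size formula for convolution and pooling layers. The layer stack, the 28x28 input, and the function names are all hypothetical.

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Output side length of a conv or pool layer (standard floor formula)."""
    return (size + 2 * padding - kernel) // stride + 1


def flattened_features(side, channels, layers):
    """Feature count after applying (kernel, stride, padding) layers and flattening."""
    for kernel, stride, padding in layers:
        side = conv_out(side, kernel, stride, padding)
    return channels * side * side


# Hypothetical stack: conv5 -> pool2 -> conv5 -> pool2, ending with 16 channels.
LAYERS = [(5, 1, 0), (2, 2, 0), (5, 1, 0), (2, 2, 0)]

declared_in_features = 16 * 5 * 5            # hard-coded with 32x32 inputs in mind
actual = flattened_features(28, 16, LAYERS)  # what 28x28 inputs really produce

if actual != declared_in_features:
    print(f"Linear layer expects {declared_in_features} features, batch provides {actual}")
```

Checking the expected feature count this way before training starts turns a cryptic runtime error into a one-line sanity check.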

2. The Fix Loop

It proposed a one-line change to the model’s forward pass. But before I could even review it, it asked to run pytest to make sure it didn’t break the shape validation tests I had set up. Watching it plan, execute, and verify the fix feels like having a senior ML engineer sitting next to you who never gets tired of running the same tests over and over.

The agentic loop is key here. It doesn’t just give you a diff; it offers to apply it, test it, and then fix it again if the test fails. This reduces the “try-fail-repeat” cycle that usually consumes a lot of development time.
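The shape of that loop is simple enough to sketch. This is a conceptual illustration, not Claude Code’s implementation: the three callables stand in for the model proposing a change, the edit being applied, and a test run such as pytest, and every name here is made up for the example.

```python
def fix_until_green(propose_fix, apply_fix, run_tests, max_attempts=3):
    """Propose, apply, and test fixes until the tests pass or attempts run out.

    Returns the number of attempts used, or None if no attempt passed.
    """
    feedback = None
    for attempt in range(1, max_attempts + 1):
        fix = propose_fix(feedback)     # the proposer sees the last failure, if any
        apply_fix(fix)
        passed, feedback = run_tests()  # e.g. a wrapper around a pytest run
        if passed:
            return attempt
    return None


# Toy stand-ins: the "bug" is a wrong in_features value, fixed on the second try.
state = {"in_features": 400}
candidates = iter([300, 256])


def propose(feedback):
    return next(candidates)


def apply(value):
    state["in_features"] = value


def run():
    ok = state["in_features"] == 256
    message = None if ok else f"expected 256, got {state['in_features']}"
    return (ok, message)


attempts = fix_until_green(propose, apply, run)
print(f"tests passed after {attempts} attempt(s)")
```

The attempt cap matters in practice: without it, a loop like this can burn through tokens on a bug it cannot solve.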

Deep Dives: Architecture and Data Pipelines

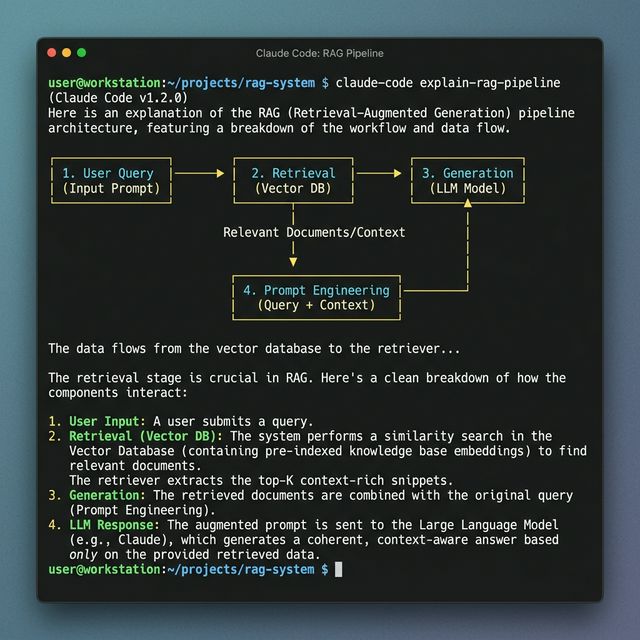

One of the best ways to use Claude Code is for understanding complex AI architectures.

Ask it: claude "explain how the data flows from the vector database to the generator in this RAG pipeline".

Explaining Complex Systems

It breaks down the retrieval-augmented generation (RAG) process with specific file references. It pointed out exactly where my embeddings were being generated and where the similarity search was being performed. This is way better than standard RAG-based search because the tool has a full, grounded context of your local files.

It can also generate ASCII diagrams or text-based flowcharts to help you visualize the system. This makes onboarding new team members to a complex AI project much faster.

Refactoring Data Scripts

I used it to refactor a messy data_preprocessing.py script that was heavily using Pandas. I told it to “modernize the script using Polars for better performance.” It didn’t just swap the syntax; it optimized the lazy evaluation chains and verified the output against a sample parquet file.

It handles the boring parts of data engineering like column renaming, type casting, and join optimizations so you can focus on the model logic. It even suggested better ways to handle missing values based on the data patterns it observed in the file.
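The verification step is worth copying whenever you swap one dataframe library for another. Below is a minimal sketch of that kind of check, assuming both the old and new pipelines can hand back their results as lists of row dictionaries. The function name rows_match and the sample rows are hypothetical, and this is not the check Claude Code ran for me.

```python
import math


def rows_match(old_rows, new_rows, key, rel_tol=1e-9):
    """Compare two lists of dict rows, ignoring row order.

    Rows are aligned by sorting on `key`; floats are compared with a tolerance.
    """
    if len(old_rows) != len(new_rows):
        return False
    old_sorted = sorted(old_rows, key=lambda row: row[key])
    new_sorted = sorted(new_rows, key=lambda row: row[key])
    for old, new in zip(old_sorted, new_sorted):
        if old.keys() != new.keys():
            return False
        for column in old:
            a, b = old[column], new[column]
            if isinstance(a, float) and isinstance(b, float):
                if not math.isclose(a, b, rel_tol=rel_tol):
                    return False
            elif a != b:
                return False
    return True


# Made-up sample: same data, different row order and float rounding noise.
old_output = [{"id": 1, "score": 0.1 + 0.2}, {"id": 2, "score": 1.0}]
new_output = [{"id": 2, "score": 1.0}, {"id": 1, "score": 0.3}]
print(rows_match(old_output, new_output, key="id"))
```

With real dataframes, the row lists could come from pandas `df.to_dict("records")` and Polars `df.to_dicts()`, so the same check covers both sides of the refactor.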

Why the Terminal Matters for AI Devs

Most of my training happens on remote servers via SSH. Sidebars and heavy IDE plugins are often laggy or just don’t work in those environments. Since Claude Code is a CLI tool, it works perfectly over a standard SSH connection.

Zero Latency Integration

You can use it alongside your favorite multiplexers like tmux or screen. This means you can have a training run going in one pane and Claude Code helping you debug a different script in another. It integrates seamlessly into a professional ML workflow without adding any heavy UI overhead.

Advanced Usage: Piping and History

The real power of a CLI-first tool is the ability to pipe data into and out of it. You can pipe the output of a git diff into Claude to summarize the changes, or pipe the result of a find command to have it refactor specific files.
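Piping also works from scripts. The sketch below trims a large diff or log to a character budget and then hands it to the CLI on standard input. It assumes the claude executable is on your PATH and that the -p flag runs a single non-interactive prompt, so check claude --help on your version before relying on it. Both helper names are hypothetical.

```python
import subprocess


def trim_for_prompt(text, max_chars=20000):
    """Keep the head and tail of long text so piped input stays within a budget.

    Assumes max_chars is at least 2.
    """
    if len(text) <= max_chars:
        return text
    head = max_chars // 2
    tail = max_chars - head
    omitted = len(text) - max_chars
    return text[:head] + f"\n...[{omitted} chars omitted]...\n" + text[-tail:]


def ask_claude(task, piped_text, max_chars=20000):
    """Send trimmed text to the CLI on stdin and return its printed answer.

    Assumption: `claude -p <task>` reads stdin and prints one response.
    """
    result = subprocess.run(
        ["claude", "-p", task],
        input=trim_for_prompt(piped_text, max_chars),
        text=True,
        capture_output=True,
        check=True,
    )
    return result.stdout
```

Something like ask_claude("summarize these changes", diff_text) then behaves like the shell pipe, with the trimming step acting as a guard against sending an enormous diff.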

It also respects your shell history. You can quickly bring up previous tasks and refine them. This makes it a very natural extension of the terminal environment you’re already used to.

The Future: Agents Building Agents

The real potential here is the shift from “tools” to “teammates.” We’re moving toward a world where the terminal is a command center for specialized agents. Claude Code is the first one that actually understands the messy, non-linear way that actual machine learning development happens.

It’s still an early research preview and it will eat your tokens if you aren’t careful. But for the repetitive debugging and refactoring that usually kills my productivity by the afternoon, it’s a lifesaver. It bridges the gap between high-level reasoning and low-level execution in a way that feels very practical for day-to-day AI engineering.
